Short Courses

All the short courses can be taken either in the conference classroom or virtually; some instructors will be onsite.

June 7, 09:00-12:00

- Computational Drug Development with Real World Data Using Machine Learning and Statistics; onsite + virtual

- Introduction to Combining Information for Causal Inference; onsite

- Statistical Topics in Outcomes Research: Patient-Reported Outcomes, Meta-Analysis, and Health Economics; virtual; 9:00-12:30

June 7, 13:00-16:00

- A Brief History of Adaptive 2-In-1 Designs; virtual

- Statistical Translation of Extrapolation: Demonstrating Efficacy and Safety of Investigational Medicines in Pediatric Populations; onsite + virtual

A Brief History of Adaptive 2-In-1 Designs

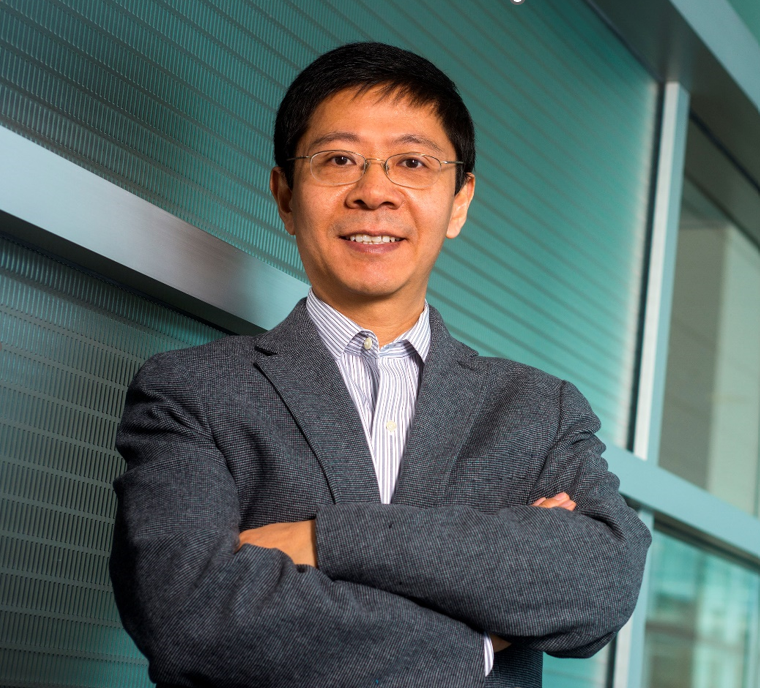

Instructors: Dr. Cong Chen & Dr. Eric (Pingye) Zhang

Dr. Cong Chen is Executive Director of Early Oncology Development Statistics at Merck & Co., Inc. He joined Merck in 1999 after graduating from Iowa State University with a Ph.D. in Statistics. He also holds an MS degree in Mathematics from Indiana University at Bloomington and a BS degree in Probability and Statistics from Peking University, PR China.

As head of the group, he oversees the statistical support of oncology early clinical development and translational biomarker research at Merck. Prior to taking the role in March 2016, he led the statistical support for the development of pembrolizumab (KEYTRUDA), a paradigm-changing anti-PD-1 immunotherapy, and played a key role in accelerating its regulatory approvals.

He is a Fellow of the American Statistical Association, an Associate Editor of Statistics in Biopharmaceutical Research, a member of the Cancer Clinical Research Editorial Board, and a leader of the DIA Innovative Design Working Group. He has published over 100 papers and 10 book chapters on the design and analysis of clinical trials, has given multiple short courses on the subject at statistical conferences, and was invited to give oral presentations at multiple medical conferences on design strategies for oncology drug development.

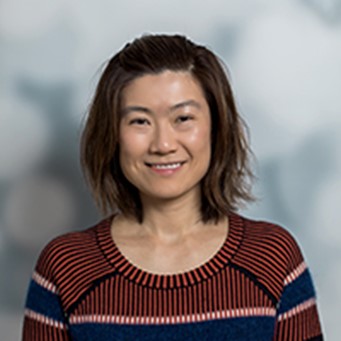

Dr. Eric (Pingye) Zhang is an Associate Director of Biostatistics at BeiGene, supporting Hematology clinical trials. Before joining BeiGene, he worked as a statistician at Merck supporting late-stage Oncology trials for pembrolizumab (KEYTRUDA) in renal cell carcinoma, NSCLC and Head & Neck cancer. He obtained a PhD degree in Biostatistics from the University of Southern California in 2016. His research interests include adaptive design, subgroup analysis, and basket trial design and analysis. He is leading a sub-team in the DIA Innovative Design Working Group for research on 2-in-1 adaptive design with dose selection.

Abstract

The 2-in-1 adaptive design [Chen et al. 2018] is an adaptive design strategy that allows a study to choose the optimal path to success based on interim trial data, often without any need to pay the penalty for adaptation. In particular, when it is applied to an adaptive randomized-controlled Phase 2/3 oncology trial, the data can be analyzed at the full alpha level at the end of Phase 2 if the trial is not expanded to Phase 3, or at the full alpha level at the end of Phase 3 if it is expanded. As FDA has repeatedly expressed growing interest in relying more on randomized-controlled trial data for regulatory decisions, a positive Phase 2 outcome may be used to justify accelerated approval, while a positive Phase 3 outcome can naturally be used to justify regular approval. This new perspective provides a much-needed safety net in case of a false No-Go decision to Phase 3. The endpoint used for the expansion decision can be the same as or different from the primary endpoints, and there is no restriction on the expansion bar. Due to its flexibility and robustness, the design has drawn tremendous attention from academic researchers and industry practitioners.

In this short course, we will review the history of the 2-in-1 design in the context of the evolving oncology drug development paradigm, survey the current landscape of the research field, and shed light on its future directions. We will also present case studies of its applications and discuss practical issues.
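As a rough illustration of the "full alpha in either path" idea described in the abstract, the Monte Carlo sketch below estimates the type I error of a 2-in-1 style decision rule under the null hypothesis. It is not the authors' code. It assumes a one-sided alpha of 0.025, a single normally distributed endpoint used for the expansion decision and for both final analyses, and independent increments between analyses. The function name, the information fractions `t_x` and `t_y`, and the expansion bars are hypothetical values chosen only for the example.

```python
import numpy as np


def two_in_one_type1_error(c, t_x=0.3, t_y=0.5, z_alpha=1.959964,
                           n_sim=400_000, seed=0):
    """Monte Carlo type I error of a 2-in-1 style rule under the null.

    c   : expansion bar applied to the interim statistic X (hypothetical)
    t_x : information fraction at the expansion decision (assumed)
    t_y : information fraction at the end of Phase 2 (assumed)
    The end of Phase 3 is taken as information fraction 1.
    """
    rng = np.random.default_rng(seed)
    # Null score process with independent normal increments.
    b_x = rng.normal(0.0, np.sqrt(t_x), n_sim)
    b_y = b_x + rng.normal(0.0, np.sqrt(t_y - t_x), n_sim)
    b_z = b_y + rng.normal(0.0, np.sqrt(1.0 - t_y), n_sim)
    # Standardized test statistics at the three analyses.
    x = b_x / np.sqrt(t_x)   # expansion decision
    y = b_y / np.sqrt(t_y)   # end of Phase 2
    z = b_z                  # end of Phase 3
    expand = x > c
    # Test Z at full alpha if expanded, otherwise test Y at full alpha.
    reject = np.where(expand, z > z_alpha, y > z_alpha)
    return reject.mean()


for bar in (-0.5, 0.0, 0.5, 1.0):
    rate = two_in_one_type1_error(bar)
    print(f"expansion bar {bar:+.1f}: estimated type I error {rate:.4f}")
```

Under these assumptions the estimated rejection rate can be compared with the nominal 0.025 for each expansion bar. Other correlation structures, such as a different endpoint for the expansion decision, would need their own simulation setup.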

Computational Drug Development with Real World Data Using Machine Learning and Statistics

Instructors: Dr. Fei Wang & Dr. Kun Chen

Fei Wang is currently an Associate Professor of Health Informatics in the Department of Population Health Sciences, Weill Cornell Medicine, Cornell University. His major research interest is machine learning and its applications in health data science. He has published more than 300 papers in AI and medicine, which have received more than 23K citations on Google Scholar. His H-index is 73. His papers have won 8 best paper awards at top international conferences on data mining and medical informatics. His team won the championship of the PTHrP results prediction challenge organized by the American Association of Clinical Chemistry in 2022, the NIPS/Kaggle Challenge on Classification of Clinically Actionable Genetic Mutations in 2017, and the Parkinson’s Progression Markers’ Initiative data challenge organized by the Michael J. Fox Foundation in 2016. Dr. Wang is the recipient of the NSF CAREER Award in 2018 and the inaugural research leadership award at the IEEE International Conference on Health Informatics (ICHI) 2019. Dr. Wang is a Fellow of the American Medical Informatics Association (AMIA), a Fellow of the International Academy of Health Sciences and Informatics (IAHSI), a Fellow of the American College of Medical Informatics (ACMI), and a Distinguished Member of the Association for Computing Machinery (ACM).

Kun Chen is an Associate Professor in the Department of Statistics at the University of Connecticut (UConn) and a Research Fellow at the Center for Population Health, UConn Health Center. Dr. Chen’s research mainly focuses on large-scale multivariate statistical learning, statistical machine learning, and healthcare analytics. He has extensive interdisciplinary research experience in several fields, including ecology, biology, agriculture, and population health. Dr. Chen has been supported by NSF for developing integrative learning methods and studying model/data heterogeneity. In recent years, his team has been supported by NIH for developing data-driven suicide prevention frameworks that leverage data from disparate sources in healthcare systems. Dr. Chen has graduated 11 PhD students, who have won paper/poster awards or honorable mentions 13 times in major student paper competitions in statistics and data science. Dr. Chen is a Fellow of the American Statistical Association (ASA) and an Elected Member of the International Statistical Institute (ISI).

Abstract

Drug development is a long and costly process. Developing a new prescription drug that gains marketing approval can take many years and is estimated to cost drugmakers $2.6 billion according to recent studies. Advanced statistical analysis and machine learning hold great promise to significantly accelerate the process of drug discovery by analyzing data generated in the biomedical domain, such as bioassays, chemical experiments, and biomedical literature. Real world data (RWD), such as electronic health records (EHR), contain routinely collected practice-based evidence for real world patients and thus encode insights about the efficacy and safety of treatments, which are invaluable for drug development. However, RWD are massive, which poses great challenges for computational analysis. In this short course, we will provide an overview of where and how RWD can help with drug development, key techniques and methodological advancements for analyzing RWD, as well as challenges and future directions. This short course can serve as introductory material for data scientists interested in RWD analysis for drug development as well as drug development practitioners interested in relevant machine learning techniques.

Introduction to Combining Information for Causal Inference

Instructors: Dr. Issa Dahabreh & Dr. Sarah Robertson

Dr. Issa Dahabreh is an Associate Professor in the Departments of Epidemiology and Biostatistics at the Harvard T.H. Chan School of Public Health and part of the CAUSALab. His research interests include the evaluation of methods for drawing causal inferences from observational data, generalizing the results of randomized trials to new target populations (in which no experiments can be conducted), and synthesizing evidence from diverse sources.

Dr. Sarah Robertson is a Postdoctoral Research Fellow in the CAUSALab and Department of Epidemiology at the Harvard T.H. Chan School of Public Health. Her research interests focus on causal inference methods that combine information to learn about a target population of interest. She is currently working to develop methods that can inform policy decisions by integrating multiple sources of data to estimate the comparative effectiveness of lung cancer screening.

Abstract

Students will learn concepts and methods for

- transportability analyses that extend causal inferences from one or more randomized trials to a new target population;

- external comparisons between an intervention examined in a single-group or comparative experimental study versus other interventions not examined in the experimental study;

- indirect comparisons of different experimental treatments evaluated in separate trials against a common control treatment.

In this course, we will introduce transportability methods for extending inferences about causal effects from a randomized trial to a target population (e.g., the population for which approval is sought for the experimental treatment in the trial). We will also show how the methods can be extended to conduct rigorous external comparisons between the experimental treatment in the trial and other treatments not evaluated in the trial, and indirect comparisons of two experimental treatments evaluated in two separate trials against a common control treatment. We will first discuss study designs and data structures for these analyses using examples from diverse clinical areas. We will then discuss the assumptions required to estimate causal effects in the target population and perform external/indirect comparisons. We will cover different statistical methods for estimating causal effects in transportability analyses, including weighting, outcome modeling (g-formula), and doubly robust methods. We will discuss how doubly robust estimators can incorporate machine learning methods to reduce model misspecification.
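The three estimator families named above (outcome modeling, weighting, and doubly robust methods) can be sketched on simulated data. The example below is only an illustration under strong simplifying assumptions and is not taken from the course handouts. It uses a single binary covariate, so every nuisance model is a saturated stratum mean, and inverse-odds-of-participation weights for the weighting estimator. The function name and all simulation parameters are hypothetical. By construction the treatment effect is 2.4 in the trial population and 1.6 in the target population.

```python
import numpy as np


def transport_estimates(n=40_000, seed=1):
    """Compare transportability estimators on simulated data (sketch)."""
    rng = np.random.default_rng(seed)
    x = rng.binomial(1, 0.5, n)                      # binary effect modifier
    s = rng.binomial(1, np.where(x == 1, 0.7, 0.3))  # 1 = trial, 0 = target sample
    a = rng.binomial(1, 0.5, n)                      # randomized arm, used only if s == 1
    y = 1.0 + a * (1.0 + 2.0 * x) + rng.normal(0.0, 1.0, n)
    y = np.where(s == 1, y, np.nan)                  # outcomes observed only in the trial

    n0 = (s == 0).sum()
    # Participation model: P(S = 1 | X = v), estimated by stratum proportions.
    p_s = np.array([s[x == v].mean() for v in (0, 1)])

    def target_mean(arm):
        in_arm = (s == 1) & (a == arm)
        # Outcome model: E[Y | X = v, S = 1, A = arm] by stratum means.
        mu = np.array([y[in_arm & (x == v)].mean() for v in (0, 1)])
        p_a = (a[s == 1] == arm).mean()              # P(A = arm | S = 1)
        w = (1.0 - p_s[x]) / (p_s[x] * p_a)          # inverse odds weights
        g_formula = mu[x[s == 0]].mean()
        weighting = (w * y)[in_arm].sum() / n0
        doubly_robust = ((w * (y - mu[x]))[in_arm].sum()
                         + mu[x[s == 0]].sum()) / n0
        return np.array([g_formula, weighting, doubly_robust])

    g, iow, dr = target_mean(1) - target_mean(0)
    in_trial = s == 1
    trial = y[in_trial & (a == 1)].mean() - y[in_trial & (a == 0)].mean()
    return {"trial": trial, "g_formula": g, "weighting": iow, "doubly_robust": dr}


for name, value in transport_estimates().items():
    print(f"{name:>14s}: {value:.3f}")
```

Because the covariate is discrete and the models are saturated, the outcome-model and doubly robust estimates coincide here. With continuous covariates and parametric or machine learning nuisance models, the estimators would generally differ.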

Learning objectives

After the completion of this course, participants will be able to:

- understand and evaluate the plausibility of the assumptions required for transportability analyses for extending inferences from a clinical trial to a target population; for using external comparator arms; and for indirect comparisons;

- appreciate the advantages and disadvantages of different methods (e.g., weighting, outcome modeling, and doubly robust methods) for conducting these analyses.

References

Handouts will be provided. Examples of our work related to the course:

- Dahabreh IJ, Robertson SE, Tchetgen EJ, Stuart EA, Hernán MA. Generalizing causal inferences from individuals in randomized trials to all trial-eligible individuals. Biometrics. 2019 Jun;75(2):685-694. doi: 10.1111/biom.13009. Epub 2019 Jun 21. PMID: 30488513.

- Dahabreh IJ, Robertson SE, Stuart EA, Hernán MA. Extending inferences from a randomized trial to a new target population. Statistics in Medicine. 2020 Jun 30;39(14):1999-2014. doi: 10.1002/sim.8426. Epub 2020 Apr 6. PMID: 32253789.

- Dahabreh IJ, Petito LC, Robertson SE, Hernán MA, Steingrimsson JA. Toward causally interpretable meta-analysis: transporting inferences from multiple randomized trials to a new target population. Epidemiology. 2020 May;31(3):334-344. doi: 10.1097/EDE.0000000000001177. PMID: 32141921.

Statistical Topics in Outcomes Research: Patient-Reported Outcomes, Meta-Analysis, and Health Economics

Instructors: Dr. Joseph C. Cappelleri & Dr. Thomas Mathew

Joseph C. Cappelleri, PhD, MPH, MS is an executive director in the Statistical Research and Data Science Center at Pfizer Inc. He earned his M.S. in statistics from the City University of New York (Baruch College), Ph.D. in psychometrics from Cornell University, and M.P.H. in epidemiology from Harvard University. As an adjunct professor, Dr. Cappelleri has served on the faculties of Brown University, University of Connecticut, and Tufts Medical Center. He has delivered numerous conference presentations and has published extensively on clinical and methodological topics, including on regression-discontinuity designs, meta-analyses, and health measurement scales. He is lead author of the book Patient-Reported Outcomes: Measurement, Implementation and Interpretation and has co-authored or co-edited three other books (Phase II Clinical Development of New Drugs, Statistical Topics in Health Economics and Outcomes Research, Design and Analysis of Subgroups with Biopharmaceutical Applications). Dr. Cappelleri is past president of the New England Statistical Society, elected Fellow of the American Statistical Association (ASA), elected recipient of the Long-Term Excellence Award from the Health Policy Statistics Section of the ASA, and elected recipient of the ISPOR Avedis Donabedian Outcomes Research Lifetime Achievement Award.

Thomas Mathew, PhD, is Professor, Department of Mathematics & Statistics, University of Maryland Baltimore County (UMBC). He earned his PhD in statistics from the Indian Statistical Institute in 1983, and has been a faculty member at UMBC since 1985. He has delivered numerous conference presentations, nationally and internationally, and has published extensively on methodological and applied topics, including cost-effectiveness analysis, bioequivalence testing, exposure data analysis, meta-analysis, mixed and random effects models, and tolerance intervals. He is the co-author of two books, Statistical Tests in Mixed Linear Models and Statistical Tolerance Regions: Theory, Applications and Computation, both published by Wiley. He has served on the Editorial Boards of several journals, and is currently an Associate Editor of the Journal of the American Statistical Association, Journal of Multivariate Analysis, and Sankhya. Dr. Mathew is a Fellow of the American Statistical Association, and a Fellow of the Institute of Mathematical Statistics. He has also been appointed as Presidential Research Professor at his campus.

Outline

Based in part on the recently published co-edited volume Statistical Topics in Health Economics and Outcomes Research (Alemayehu et al.), this short course recognizes that, with ever-rising healthcare costs, evidence generation through health economics and outcomes research (HEOR) plays an increasingly important role in decision-making about the allocation of resources. This course highlights three major topics related to HEOR, with objectives to learn about 1) patient-reported outcomes, 2) analysis of aggregate data, and 3) methodological issues in health economic analysis. Key themes on patient-reported outcomes are presented regarding their development and validation: content validity, construct validity, and reliability. Regarding analysis of aggregate data, several areas are elucidated: traditional meta-analysis, network meta-analysis, assumptions, and best practices for the conduct and reporting of aggregated data. For methodological issues on health economic analysis, cost-effectiveness criteria are covered: traditional measures of cost-effectiveness, the cost-effectiveness acceptability curve, statistical inference for cost-effectiveness measures, the fiducial approach (or generalized pivotal quantity approach), and a probabilistic measure of cost-effectiveness. Illustrative examples are used throughout the course to complement the concepts. Attendees are expected to have taken at least one graduate level course in statistics.
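For readers new to the cost-effectiveness measures listed above, the sketch below computes an incremental cost-effectiveness ratio (ICER) and a bootstrap cost-effectiveness acceptability curve (CEAC) on simulated two-arm data. It is an illustration under stated assumptions, not material from the course. The cost and effect distributions, the sample size, the willingness-to-pay grid, and the function name `ceac` are all hypothetical. The CEAC is estimated here as the bootstrap proportion of replicates with positive incremental net benefit, which is one common convention.

```python
import numpy as np

rng = np.random.default_rng(7)
n = 500  # patients per arm (hypothetical)

# Simulated per-patient costs (right-skewed) and effects (e.g., QALYs).
cost_new = rng.gamma(shape=4.0, scale=3000.0, size=n)
cost_std = rng.gamma(shape=4.0, scale=2500.0, size=n)
eff_new = rng.normal(0.70, 0.20, n)
eff_std = rng.normal(0.60, 0.20, n)

# Incremental cost, incremental effect, and their ratio (the ICER).
d_cost = cost_new.mean() - cost_std.mean()
d_eff = eff_new.mean() - eff_std.mean()
icer = d_cost / d_eff


def ceac(wtp_grid, n_boot=2000):
    """Bootstrap share of replicates with positive incremental net benefit."""
    # Resample patients within each arm, keeping each cost-effect pair together.
    idx_new = rng.integers(0, n, size=(n_boot, n))
    idx_std = rng.integers(0, n, size=(n_boot, n))
    dc = cost_new[idx_new].mean(axis=1) - cost_std[idx_std].mean(axis=1)
    de = eff_new[idx_new].mean(axis=1) - eff_std[idx_std].mean(axis=1)
    # Incremental net benefit at willingness to pay w: w * de - dc.
    return np.array([(w * de - dc > 0).mean() for w in wtp_grid])


wtp_grid = np.array([0.0, 10_000.0, 20_000.0, 50_000.0, 100_000.0])
curve = ceac(wtp_grid)
print(f"ICER: {icer:,.0f} per unit of effect")
for w, p in zip(wtp_grid, curve):
    print(f"willingness to pay {w:>9,.0f}: P(cost-effective) = {p:.3f}")
```

The nonparametric bootstrap is used here only for simplicity. The parametric and fiducial approaches mentioned in the outline address the same inferential question in other ways.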

Learning objectives

To understand and critique the major methodological issues in outcomes research on the development and validation of patient-reported outcomes, traditional meta-analysis and network meta-analysis, and health economic analysis.

References

- Alemayehu D, Cappelleri JC, Emir B, Zou KH (editors). Statistical Topics in Health Economics and Outcomes Research. Boca Raton, Florida: Chapman & Hall/CRC Press. 2017.

- Bebu I, Luta G, Mathew T, Kennedy TA, Agan BK. Parametric cost-effectiveness inference with skewed data. Computational Statistics and Data Analysis. 2016; 94:210–220.

- Bebu I, Mathew T, Lachin JM. Probabilistic measures of cost-effectiveness. Statistics in Medicine. 2016; 35:3976-3986.

- Cappelleri JC, Zou KH, Bushmakin AG, Alvir JMJ, Alemayehu D, Symonds T. Patient-Reported Outcomes: Measurement, Implementation and Interpretation. Boca Raton, Florida: Chapman & Hall/CRC Press. 2013.

Statistical Translation of Extrapolation: Demonstrating Efficacy and Safety of Investigational Medicines in Pediatric Populations

Instructors: Dr. James Travis, Dr. Margaret (Meg) Gamalo & Dr. Jingjing Ye

James Travis is a statistical team leader in the Office of Biostatistics within the Center for Drug Evaluation and Research at the US Food and Drug Administration and leads the team supporting the Division of Pediatric and Maternal Health. James joined the Agency in 2014 following completion of his PhD at the University of Maryland, Baltimore County. He is a representative on the Pediatric Review Committee for the Office of Biostatistics. He has interests in Bayesian methods, particularly the use of informative priors in implementing extrapolation in pediatric clinical trials.

Margaret (Meg) Gamalo, PhD is Head of Statistics – Inflammation and Immunology, Pfizer Innovative Health. She combines expertise in biostatistics, regulatory matters, and adult and pediatric drug development. She recently was a Research Advisor, Global Statistical Sciences at Eli Lilly and Company and prior to that was a Mathematical Statistician at the Food and Drug Administration. Meg leads the Pediatric Innovation Task Force at the Biotechnology Innovation Organization. She also actively contributes to research topics within the European Forum for Good Clinical Practice – Children’s Medicine Working Party. Meg is Editor-in-Chief of the Journal of Biopharmaceutical Statistics and is actively involved in many statistical activities in the American Statistical Association. She received her PhD in Statistics from The University of Pittsburgh and a master’s in applied mathematics from the University of the Philippines.

Dr. Jingjing Ye is an executive director and currently leads a global team, Data Science and Operational Excellence (DSOE), within Global Statistics and Data Sciences (GSDS) at BeiGene. She has over 16 years of experience in the pharmaceutical industry and at the US FDA, with a focus on cancer drug discovery and development. Her statistical and regulatory experience spans the full spectrum of the patient’s treatment journey, from diagnosis and treatment to living with the condition. Before BeiGene, she was most recently a statistics team leader in the Office of Biostatistics in CDER. At CDER, she supervised a team of statistical analysts and reviewers for designing, reviewing and analyzing clinical trials to support drug approvals throughout preIND, IND, NDA/BLA and post-approval studies in oncology and hematology. She was the statistical representative within the Oncology Center of Excellence (OCE) Pediatric Review sub-committee, responsible for overseeing all pediatric review operations within the OCE.

She is currently the co-lead on several working groups, including the ASA Biopharmaceutical Section (BIOP) Pediatric Working Group (pediatric extrapolation sub-team), the ASA BIOP Statistical Considerations in Oncology Pediatric Trials subgroup under the ASA BIOP Stats Methods in Oncology working group, and the DIA-ASA Master Protocol Patient Engagement Sub-team. She received her PhD degree in statistics from the University of California, Davis and a B.S. in Applied Mathematics from Peking University in China.

Abstract

Pediatric drug development often faces substantial challenges, including economic, logistical, technical, and ethical barriers, among others. Pediatric drug development lags adult development by about 8 years and often faces infeasibility of trials, resulting in children being a large, underserved population of "therapeutic orphans," as an estimated 80% of children are treated off-label (Mulugeta et al. in Pediatr Clin 64(6):1185-1196, 2017). Among many efforts to mitigate these feasibility barriers, and as an ethical approach to minimizing the exposure of pediatric patients to research risks, increased attention has been given to extrapolation; that is, the leveraging of available data from adults or older age groups to draw conclusions for the pediatric population. The recent ICH E11A harmonization on a pediatric extrapolation framework provides a clearer path forward for pediatric drug development programs leveraging some degree of extrapolation despite uncertainties. In this framework, the degree to which extrapolation can be used lies along a continuum representing the uncertainties to be addressed through generation of new pediatric evidence (Gamalo et al. 2021).

In recognition of the challenges in pediatric drug development, the ASA Biopharmaceutical Section Pediatric Working Group (SPDPx) was chartered in 2018 to serve as a forum for development, education and dissemination of statistical research and innovation, and a forum for interchange of meaningful scientific opinion across multiple disciplines to accelerate development of medicines for children. Within the SPDPx, a sub-team on pediatric extrapolation was formed to specifically focus on scientific necessity through efficient and robust innovative trial designs and analytic strategies. In this short course, instructors from the SPDPx will cover both the pediatric drug development process and statistical methodologies for efficacy and safety evaluations.

Outline of the tutorial

- Part I: Pediatric drug development overview: regulatory history and ICH guidelines, including extrapolation strategy, process, concept, and plan.

- Part II: The Statistical Methodologies in Pediatric Extrapolation Framework, including pediatric extrapolation source and target population, Bayesian framework and methodologies, examples and available statistical software.

- Part III: The extent of development relating to safety and the assessment of safety in pediatric patients.

Instructors' background

The instructors gave this short course at the Deming Conference 2022. All of the instructors have been active members of the ASA Biopharmaceutical Section Pediatric Working Group (SPDPx) since 2018. James and Meg are co-leads of SPDPx. Jingjing Ye is the lead on the pediatric extrapolation sub-team within the SPDPx.